COVID19 presented an unprecedented challenge for modern science/medicine. Faced with a novel infectious disease, doctors, scientists, and health officials rose to the challenge with remarkable speed. The rate at which we went from never having heard of COVID19 to having safe and effective vaccines continues to astound me and is a testament to the power of the scientific method. Dealing with this pandemic was made all the more challenging by a politically motivated anti-science campaign, with high-ranking government officials spreading one conspiracy theory after another and dangerously misleading the public at every turn.

What really concerns me, however, is that now that the dust has settled and COVID has shifted from a pandemic stage to an endemic stage, many people (possibly even an increasing number of people) are still under the delusion that the scientists got it wrong and the conspiracy theorists got it right. I constantly hear people say things like, “well, fact checkers said the COVID vaccines were safe and look how that turned out,” or “‘scientists’ said we were crazy for taking a dewormer, but now studies show we were right,” or “I’m not opposed to science, but I am opposed to the way it is politically weaponized like it was during COVID.” Indeed, one poll found that a third of Americans think that the COVID vaccines killed thousands of otherwise healthy people.

These sorts of beliefs and comments are completely out of touch with reality and contrary to the facts. They are also extremely dangerous, not only because recommendations like staying up to date with COVID boosters continue to be relevant, but also because this type of thinking affects how people respond to other scientific issues and future disease outbreaks. This erosion in the public trust in science is incredibly damaging and based entirely on lies and conspiracy theories.

Therefore, I want to set the record straight on four of the biggest COVID topics that I see coming up over and over again: vaccines, masks, hydroxychloroquine, and ivermectin. In all four cases, despite popular perception to the contrary, it was the scientists/health officials who got it right, and the conspiracy theorists who were dangerously wrong. That’s not to say, of course, that every recommendation was correct or that every study produced helpful results. There were obviously missteps as scientists and health officials did their best to update their knowledge and recommendations based on new findings and the progression of the pandemic. Nevertheless, the overarching effect was that the scientists’/doctors’ recommendations saved millions of lives, while the conspiracy theorists’ claims were entirely bogus and cost lives.

As we go, I will be relying on peer-reviewed studies (i.e., claims backed by data), but I want to ensure that I am citing studies that are truly representative of the literature. Thousands of studies have been published since the start of the outbreak, and there are a large number of small, low-quality studies (particularly from early in the pandemic). Therefore, I will be focusing largely on systematic reviews and meta-analyses, especially reviews and meta-analyses of randomized controlled trials. These studies are considered to be the highest form of scientific evidence, because, when done correctly, they systematically collect a large number of studies and pool their results to look for a consistent pattern. As I have explained before (here, here, and here), on any well-studied topic you will find lots of outliers, lots of low-quality studies, and lots of statistical noise. Systematic reviews and meta-analyses try to cut through that noise to look at the overarching effect and avoid cherry-picking studies. If a result is real, you should see that consistently reflected in the highest-quality studies.
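To make "pooling" a little more concrete, here is a minimal Python sketch of one common approach, fixed-effect inverse-variance pooling of risk ratios. It is only an illustration: the three input studies are made-up numbers, not results from any paper cited here, the function name is hypothetical, and real meta-analyses typically also assess heterogeneity, bias, and study quality.

```python
import math

def pool_fixed_effect(studies):
    """Pool (risk_ratio, ci_low, ci_high) tuples with inverse-variance weights.

    Works on the log scale and returns (pooled_rr, pooled_low, pooled_high).
    """
    total_weight = 0.0
    weighted_sum = 0.0
    for rr, low, high in studies:
        # Recover the standard error of log(RR) from the width of the 95% CI.
        se = (math.log(high) - math.log(low)) / (2 * 1.96)
        weight = 1 / se ** 2          # more precise studies get more weight
        total_weight += weight
        weighted_sum += weight * math.log(rr)
    pooled_log = weighted_sum / total_weight
    pooled_se = math.sqrt(1 / total_weight)
    return tuple(math.exp(pooled_log + z * pooled_se) for z in (0, -1.96, 1.96))

# HYPOTHETICAL inputs, invented purely for illustration.
hypothetical_studies = [(0.80, 0.50, 1.28), (0.70, 0.45, 1.09), (0.75, 0.55, 1.02)]

rr, low, high = pool_fixed_effect(hypothetical_studies)
print(f"pooled RR = {rr:.2f} (95% CI {low:.2f} to {high:.2f})")
```

The point of the sketch is that several small studies pointing the same way can, once pooled, give a much narrower confidence interval than any one of them alone, which is why a consistent pattern across studies carries so much weight.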

Face Masks

Let’s start with the use of masks for helping to control the spread of COVID. I will acknowledge at the start that on this topic, more than any of the others, there was initially a lot of confusion and mixed messaging. It wasn’t initially clear if masks were effective, and shortages in supplies made many governments want to save masks for those who needed them most. However, after those initial growing pains, most government health agencies around the world endorsed the use of masks (especially N95s), with governments often instituting mask mandates. From a disease-prevention standpoint, that was the right call.

Multiple systematic reviews and meta-analyses have confirmed that wearing a mask helps to control the spread of COVID, ultimately reducing the number of cases, hospitalizations, and deaths (e.g., Ford et al. 2021; Baier et al. 2022; Hajmohammadi et al. 2023). Indeed, so many of these studies have been published that one research group put together a systematic review of 28 systematic reviews (SeyedAhmad et al. 2023), with the conclusion that masks are beneficial at controlling COVID. This is extremely compelling evidence. Nevertheless, simply listing a bunch of studies is clearly unsatisfactory, and each study had its own end points, populations, and caveats, so let’s look more closely.

First, it’s worth noting that not all masks are created equal. Several meta-analyses and systematic reviews have shown that N95 masks are best, followed by surgical masks, with more mixed results on the effectiveness of cloth masks (Lu et al. 2023; Wu et al. 2023; Zeilinger et al. 2023). This is an important factor to bear in mind both when evaluating studies and when making your own choices regarding masking.

Second, you may be wondering about the contexts of these studies. Some of the studies cited so far were, for example, on healthcare workers, and while they clearly show a benefit to wearing masks in that setting, it is fair to wonder if that also applies to other settings. While masking is more important for the protection of certain groups, there is ample evidence that it is effective in many settings. Unsurprisingly, there is a strong protective effect among the elderly living in assisted care facilities (see this meta-analysis: Chen et al. 2024), but there also appear to be benefits among younger groups. For example, Viera 2024 found that 10 out of 14 studies on masks in school settings found a protective effect. It is also worth noting that 2 of the studies that did not find a benefit were rated as having a “serious risk of bias,” and in many cases it was not clear what sort of mask or face covering was being used.

Looking at the effects of masks for the public more generally becomes messier from an experimental design/control standpoint, but multiple studies have still found ways to tackle it. For example, Rader et al. 2021 used survey data from 378,207 people to estimate mask use across communities, which they then correlated with community transmission rates. From this, they concluded that a 10% increase in mask use was associated with increased transmission control (i.e., wearing a mask reduces the spread of COVID).

The most compelling evidence for a general community protection effect comes from studies of mask mandates. A systematic review of these studies found that 21 out of 21 studies documented “benefits in terms of reductions in either the incidence, hospitalization, or mortality, or a combination of these outcomes” (Ford et al. 2021). Let’s look closer at some of these studies.

In many cases, mask mandates were patchy, with some local areas enacting them, while others did not. This allows for comparisons of areas with and without those mandates (ideally while controlling for other differences between them). For example, Huang et al. (2022) took data from hundreds of counties across the USA that implemented mask mandates and paired them with nearby, similar counties that did not impose mandates. They then controlled for environmental and economic factors and compared the counties before and after the mandates. Prior to the mandates, counties that would and would not eventually implement mandates had similar case loads that were increasing at similar rates, but after the mandates went into place, the groups diverged, with significantly lower case rates in the counties with mandates. At the start of the mandates, both groups were experiencing ~14 new cases per day per 100,000 people, whereas 30 days after the mandate, counties without mandates had climbed to ~20 new cases/day/100,000 people, while counties with mask mandates were at ~14.5 cases/day/100,000 (i.e., with mandates the rate remained largely stable, while without mandates, it continued to climb). By 40 days, counties without mandates continued to have high case loads (~19 cases/day/100,000), while counties with mandates dropped to ~12 cases/day/100,000. That’s a pretty substantial difference and makes it clear that masks had an enormous protective effect.
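To put those numbers side by side, here is a small Python sketch of the arithmetic. It uses only the approximate rates quoted above from Huang et al. (2022); the names are illustrative, and because the inputs are rounded, the outputs are rough comparisons rather than figures reported by the study.

```python
# Approximate new cases per day per 100,000 people, as quoted above
# from Huang et al. (2022). Keys are days since the mandates began.
rates = {
    0: {"mandate": 14.0, "no_mandate": 14.0},
    30: {"mandate": 14.5, "no_mandate": 20.0},
    40: {"mandate": 12.0, "no_mandate": 19.0},
}

def gap(day):
    """Return (absolute gap, relative reduction) for mandate counties on a given day."""
    r = rates[day]
    absolute = r["no_mandate"] - r["mandate"]
    relative = absolute / r["no_mandate"]
    return absolute, relative

for day in sorted(rates):
    absolute, relative = gap(day)
    print(f"day {day}: {absolute:.1f} fewer cases/day/100,000 "
          f"({relative:.1%} lower than counties without mandates)")
```

On these rounded figures, counties with mandates were running roughly a quarter lower at day 30 and more than a third lower at day 40.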

Lyu and Wehby (2020) used a similar approach but at the state level (still within the USA). Once again, states with mask mandates enjoyed significant reductions in the spread of COVID relative to states without mandates. Indeed, they estimated that the mask mandates that were in place prevented 230,000–450,000 cases over a 22-day period. A similar study in Ontario, Canada (Peng et al. 2024) found that, between June and December 2020, mask mandates prevented 290,000 cases and 3,008 deaths in the areas that had them, saving 610 million Canadian dollars. That’s a pretty substantial saving compared to the minuscule cost of masking (also see similar results for the state of Illinois from Castonguay et al. 2024).

I want to be clear here that I haven’t made any political assertions. I have simply shown the very large and robust body of data showing that wearing a mask helps prevent the spread of COVID. Mask mandates saved thousands of lives, and many more could have been saved if mandates had been universal. If you want to make the political argument that your freedom not to wear a mask is more important than saving those lives, you can do that, but you can’t claim that the mandates don’t work, because the data clearly shows that they do.

Fortunately, COVID numbers are low enough at the moment that masks probably aren’t necessary most of the time. However, flare-ups and smaller outbreaks will continue to occur, and I’d encourage you to continue wearing a mask in those situations or any other time that you are at a high risk of exposure or spreading COVID (or other respiratory viruses for that matter).

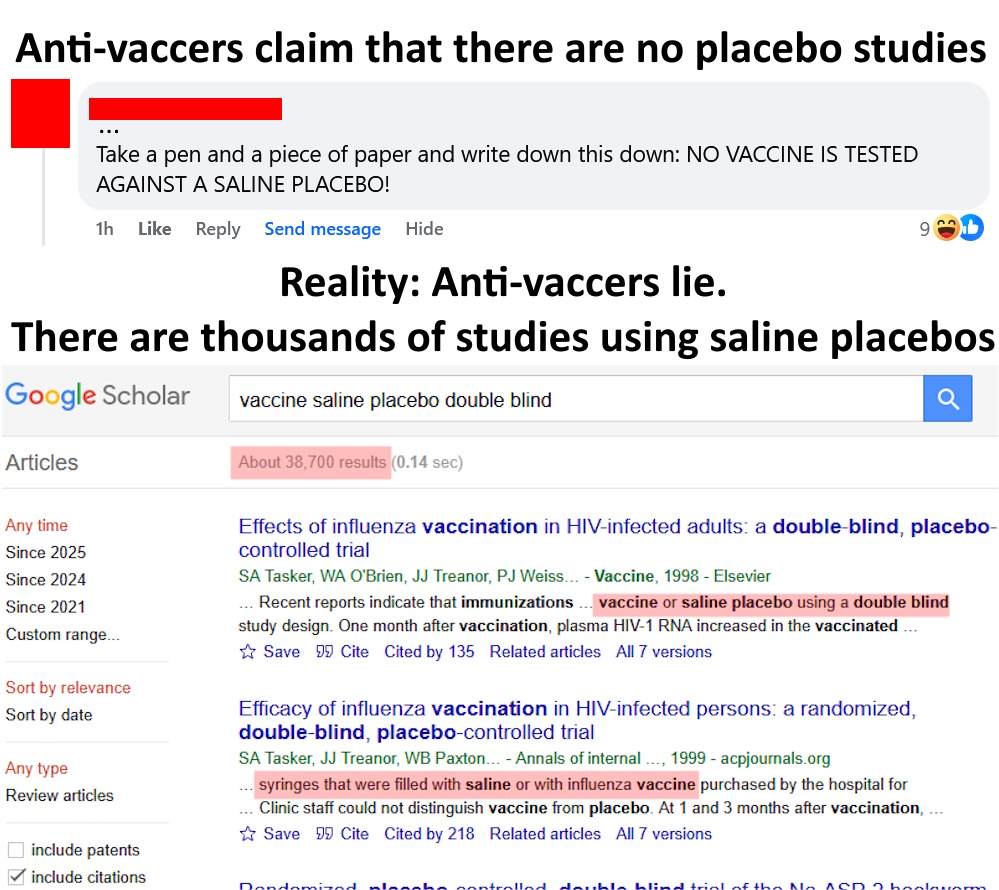

COVID Vaccines

Let’s now turn our attention to the big one: vaccines. At the start, we need to clear up a few misconceptions. First, as explained here (see #10), the COVID vaccines were carefully tested prior to releasing them to the public. It is simply not true that the vaccines were rushed or that scientists were pushing the vaccines before knowing if they were safe and effective. That said, now that billions of doses have been delivered, we have been able to conduct massive follow-up studies to further ensure that the original results were correct.

Second, many people seem to be under the impression that the vaccines didn’t work because they did not immediately, completely, and entirely stop the spread of COVID. I frequently see people post things like, “they lied when they said the vaccine would stop the spread of COVID.” In reality, no vaccine is 100% effective. The expectation was never that it would fully stop COVID in all cases. Rather, the expectations were that it would greatly reduce the spread of COVID and reduce the severity of infections when they did occur (both of which were correct). Additionally, the effectiveness of a vaccine at controlling the spread of a disease is always dependent on the percent of the population that receives the vaccine (i.e., herd immunity; see Coccia 2021). So, by refusing the vaccine, people were creating a self-fulfilling prophecy in which they reduced the overall effectiveness of the vaccine at preventing community transmission.

With all of that said, the COVID vaccines were still an enormous success and, as we’ll see, they have saved millions of lives.

As a caveat at the start, it is worth stating that many different COVID vaccines have been developed, and they vary somewhat in their effectiveness and safety; however, the overarching picture is that they are highly safe and effective (see this large comparison of vaccine brands: Toubasi et al. 2022), so in many cases, studies considered multiple vaccine brands together to look at the overall success of vaccination campaigns (usually pooled by vaccine type [e.g., mRNA]).

Let’s now look at the safety of these vaccines. All vaccines (and all medications) have a risk of side effects. This is nothing new to COVID vaccines. However, you have to remember that not vaccinating also has risks. In the case of COVID vaccines, studies have repeatedly found that most adverse reactions are mild, and serious complications are extremely rare. For example, in a large meta-analysis comparing multiple vaccine types, Kouhpaye and Ansari (2022) found that the most common adverse reactions were fever, fatigue, headache, pain, redness, and swelling and concluded that, “At the present moment the benefits of all types of vaccines approved by WHO, still outweigh the risks of them and vaccination if available is highly recommended.” Numerous other systematic reviews and meta-analyses have found similar results, with very few serious side effects (Amanzio et al. 2022; Haas et al. 2022; Bello et al. 2023). These results persist even if we look at meta-analyses on subsets of the population like the elderly (Xu et al. 2023). Likewise, a meta-analysis on children aged 5-11 found that while there were minor side effects, there was “no increased risk of serious adverse events” (Piechotta et al. 2023).

Nevertheless, rare though they may be, serious side effects do occasionally occur. As with most vaccines, an allergic reaction is the most common serious side effect, but when looking across 15 studies with a total of 735,515 participants, Bello et al. (2023) only found 43 cases of anaphylaxis, none of which resulted in death. Indeed, in all the papers I have read, I have yet to encounter any reported deaths where the evidence compellingly showed that the COVID vaccine was the cause (see Lamptey 2021). It is a total myth that thousands of people died from the COVID vaccines.
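For a sense of scale, the anaphylaxis figures above can be turned into a crude rate with a few lines of Python. This is a simple division of the two totals quoted from Bello et al., not the pooled estimate a meta-analysis would report, and the variable names are illustrative.

```python
# Totals quoted above from Bello et al.: anaphylaxis cases across 15 studies.
anaphylaxis_cases = 43
participants = 735_515

crude_risk = anaphylaxis_cases / participants

print(f"crude risk: {crude_risk:.4%} of participants")
print(f"that is {crude_risk * 100_000:.1f} cases per 100,000 participants")
print(f"or about 1 in {round(participants / anaphylaxis_cases):,}")
```

In other words, on these totals the crude rate works out to roughly 1 case in every 17,000 participants.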

What about myocarditis? This is the adverse effect that probably got the most attention. There is roughly a 2x increase in your risk of myocarditis following COVID vaccination (i.e., you are twice as likely to develop it; Juan Gao et al. 2023), but that is the relative risk (i.e., how much your risk changes because of vaccination). To really understand the situation, we need to look at the absolute risk (i.e., how likely you actually are to develop myocarditis). A large increase in relative risk can still be a small increase in absolute risk. In the case of myocarditis, without vaccination (or COVID infection), the risk of myocarditis is 0.8–16.7 cases per 1 million people over a 30-day period. In other words, without COVID vaccination or infection, there is a 0.00008–0.00167% risk of someone developing myocarditis over a 30-day period. It’s a very small chance. Now, with the vaccine, that risk roughly doubles to 1.6–34.2 cases per million people, or 0.00016–0.00342%, which is still an incredibly small risk (numbers are from this meta-analysis: Alami et al. 2023). So yes, the risk increases, but your odds of developing myocarditis are still very, very low.
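The relative-versus-absolute distinction is easy to check with the numbers above. The following Python sketch uses only the ranges quoted from Alami et al. (2023); pairing the low end with the low end and the high end with the high end is a simplifying assumption, and the function names are illustrative.

```python
def per_million_to_percent(cases_per_million):
    """Convert a rate per 1,000,000 people into a percentage."""
    return cases_per_million / 1_000_000 * 100

def compare(baseline, exposed):
    """Return (relative risk, extra cases per million) for one pair of rates."""
    return exposed / baseline, exposed - baseline

# 30-day myocarditis risk ranges (cases per million people), as quoted above.
background = (0.8, 16.7)   # no vaccination and no COVID infection
vaccinated = (1.6, 34.2)   # following vaccination

for baseline, exposed in zip(background, vaccinated):
    relative, extra = compare(baseline, exposed)
    print(
        f"{baseline} -> {exposed} per million: "
        f"{relative:.2f}x relative risk, "
        f"{extra:.1f} extra cases per million "
        f"({per_million_to_percent(extra):.5f} percentage points)"
    )
```

A relative risk of about 2 sounds alarming on its own, but the absolute increase is on the order of 1 to 18 extra cases per million people.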

Additionally, we have to consider the fact that infection with COVID also increases your risk of myocarditis. Indeed, an actual COVID infection causes a much greater increase in risk than the vaccine causes. A massive study with millions of patients found excess rates (relative to background levels) of 1–6 events per million people in the first 28 days after the first vaccine dose and 10 per million in the first 28 days after the second vaccine dose, compared to 40 per million in the first 28 days following COVID infection (Patone et al. 2022). Likewise, another large meta-analysis found that a COVID infection was 7x more likely to cause myocarditis than was a COVID vaccine (Voleti et al. 2022). So, you have to look not only at the risk from the vaccine, but also the increased risk of COVID and myocarditis without the vaccine.

Now that we have looked at the risks, let’s turn our attention to the benefits, which are enormous. Meta-analysis after meta-analysis after meta-analysis shows that the COVID vaccines substantially reduce community transmission (i.e., reduce infection rates), reduce the severity of infections, reduce hospitalizations, and reduce deaths (Huang and Kuan 2022; Rahmani et al. 2022; Zheng et al. 2022; Wu et al. 2023).

Other scientists have gone beyond simply reporting the differences in rates between the vaccinated and unvaccinated and have used those numbers, along with population sizes, vaccination rates, and infection rates to calculate the number of lives saved by the COVID vaccines. Using this approach, Watson et al. (2022) estimated that from 8 December 2020 to 8 December 2021, across 185 countries, the COVID vaccines saved 14.4–19.8 million lives! So to anyone who thinks the vaccines were “rushed” or were being “forced onto the public” this is why we didn’t wait longer to release them and why scientists, doctors, and health agencies were campaigning so hard for people to vaccinate. No one was “weaponizing science.” Rather, scientists used the best evidence available to make a correct judgement call that saved millions of lives, and even more lives could have been saved if more people had taken the vaccine.

To be fair, not all analyses have come up with the same number of lives saved, but the number is still always in the millions. For example, Mesle et al. (2024) looked at lives saved only in the WHO European Region between December 2020 and March 2023 and concluded that COVID vaccines had saved 1.6 million lives there, which is a huge benefit, particularly when the risks associated with the vaccines are so small.

Finally, as scientists have been saying all along, the benefits aren’t just reduced infections and reduced deaths. Even when someone with the vaccine becomes infected, the infection tends to be milder. Several systematic reviews and meta-analyses have looked at this in the context of “long-COVID,” with the consistent finding that those who were vaccinated before getting a COVID infection are less likely to have persistent “long-COVID” symptoms compared to people who became infected without first receiving the vaccine (Notarte 2022; Watanabe et al. 2023).

Likewise, a huge meta-analysis of over 24 million people found the following (Ikeokwu et al. 2023; my emphasis):

Being unvaccinated had a significant association with severe clinical outcomes in patients infected with COVID-19. Unvaccinated individuals were 2.36 times more likely to be infected, with a 95% CI ranging from 1.13 to 4.94 (p = 0.02). Unvaccinated subjects with COVID-19 infection were 6.93 times more likely to be admitted to the ICU than their vaccinated counterparts, with a 95% CI ranging from 3.57 to 13.46 (p < 0.0001). The hospitalization rate was 3.37 higher among the unvaccinated compared to those vaccinated, with a 95% CI ranging from 1.92 to 5.93 (p < 0.0001). In addition, patients with COVID-19 infection who are unvaccinated were 6.44 times more likely to be mechanically ventilated than those vaccinated, with a 95% CI ranging from 3.13 to 13.23 (p < 0.0001). Overall, our study revealed that vaccination against COVID-19 disease is beneficial and effective in mitigating the spread of the infection and associated clinical outcomes.

In summary, the data clearly vindicate scientists and health officials and show that COVID vaccines were an enormous success. Releasing the vaccines saved millions of lives and prevented countless infections and hospitalizations. Further, the vaccines have proven themselves to be highly safe, and none of the doomsday predictions from conspiracy theorists and anti-vaccers have come true. With billions of doses given, it would be really obvious if these vaccines were actually dangerous. Hospitals would be filled with people dying from the vaccines. That isn’t happening, because the vaccines are very safe.

This continues to be relevant today. COVID has mercifully shifted to an endemic stage (thanks in part to vaccines), but it will continue to be a threat for the foreseeable future, and staying up to date with your COVID vaccination status continues to be a great way to protect yourself and those around you. Further, misinformation about the COVID vaccines seems to be eroding people’s trust in vaccines more generally. Earlier this week, for example, the USA had its first measles death in a decade. The unfortunate child was not vaccinated and was in an area with low vaccine coverage. Their death is a direct consequence of science denial.

Ivermectin and Hydroxychloroquine

Now that we’ve looked at two extremely successful interventions, let’s look at two major failures: ivermectin and hydroxychloroquine. I’m going to keep this section nice and short: neither of them works at preventing or treating COVID. Both drugs received extensive testing (thanks to their promotion by certain politicians and political pundits), and they both failed that testing. Here, for example, are multiple meta-analyses and systematic reviews on ivermectin that failed to find a benefit (Deng et al. 2021a; Roman et al. 2021; Marcolino et al. 2022; Shafiee et al. 2022). Hydroxychloroquine is the same story, with numerous systematic reviews and meta-analyses finding that it is not effective at treating COVID (Kashour et al. 2020; Deng et al. 2021b; Mitja et al. 2022; Hong 2023; Lucchetta et al. 2023; Kaushik et al. 2024). It’s also worth mentioning that you can find studies looking at different doses, age groups, severity, etc. and they all tell the same story.

Further, these drugs are not without risks. Ivermectin is usually well tolerated with low side effects, but it still has side effects (Roman et al. 2021). Hydroxychloroquine appears worse, with far more studies reporting side effects (Izcovich et al. 2022; Hong 2023; Kaushik et al. 2024). To be clear, most of the side effects are fairly mild (e.g., nausea and diarrhea), but given a lack of benefit, the risk assessment clearly does not come out in its favor.

At this point, it is important to acknowledge that if you dig around, you can find some studies that disagree and do report benefits of either ivermectin or hydroxychloroquine, but there are multiple critical caveats, and it is important to understand how to assess the scientific literature. First, there were several really bad studies early on which were later retracted, but some reviews/meta-analyses included those studies, which massively biased the results. Second, even in the more reliable studies, the effect size is generally small, the statistics are weak, and the result is inconsistent. For example, Song et al. (2024) found that ivermectin did not reduce mortality or the time until a PCR negative test, but there was a slight reduction in the odds of someone needing to be on a ventilator. To explain what I mean by “slight” for these sorts of relative risk assessments, 1 = no effect, <1 = reduced risk, >1 = increased risk, and for something to be statistically significant, we usually want the 95% confidence interval to exclude the number 1 (i.e., we are 95% confident that the true result is either less than or greater than 1). In Song et al., the effect for ventilation was 0.67 with a 95% confidence interval of 0.47–0.96. So it technically cleared the bar for statistical significance (i.e., the confidence interval did not include 1), but it is a really weak result with the confidence interval almost containing 1, and it is a result that other studies didn’t support. Similarly, when Garcia-Albeniz et al. (2022) looked at the ability of hydroxychloroquine to prevent COVID, they technically found an effect, but it was very weak: 0.72 with a 0.55–0.95 confidence interval. As a final example, Ramdani and Bouazza’s 2023 meta-analysis of low dose hydroxychloroquine for treating COVID found a marginally significant benefit for reducing mortality (0.73, confidence interval = 0.55–0.97), but failed to find a reduced risk of needing a ventilator or being admitted to the ICU. Further, even the significant reduction in mortality disappeared when they limited their study to only include randomized controlled trials (the most robust type of trial).
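The "does the confidence interval exclude 1?" rule can be written down in a few lines of Python. The sketch below applies it to the three results quoted above; the function names are illustrative, and measuring how close the nearer bound sits to 1 is just a rough way to show how marginal a result is, not a formal statistic.

```python
# (estimate, CI lower bound, CI upper bound), as quoted above.
results = {
    "Song et al. 2024 (ivermectin, ventilation)": (0.67, 0.47, 0.96),
    "Garcia-Albeniz et al. 2022 (HCQ, prevention)": (0.72, 0.55, 0.95),
    "Ramdani and Bouazza 2023 (low-dose HCQ, mortality)": (0.73, 0.55, 0.97),
}

def excludes_one(low, high):
    """True if the whole interval lies below 1 or above 1."""
    return high < 1 or low > 1

def distance_from_one(low, high):
    """Distance from the nearer CI bound to 1 (0.0 if the interval contains 1)."""
    if not excludes_one(low, high):
        return 0.0
    return min(abs(low - 1), abs(high - 1))

for name, (estimate, low, high) in results.items():
    print(f"{name}: {estimate} ({low} to {high}), "
          f"excludes 1: {excludes_one(low, high)}, "
          f"nearest bound is {distance_from_one(low, high):.2f} from 1")
```

All three intervals exclude 1, but in each case the upper bound sits within 0.05 of it, which is what "technically significant but weak" looks like in practice.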

This pattern of most studies failing to find an effect with a few scattered papers finding inconsistent, small, barely significant results that disappear depending on how you look at the data is exactly what we expect for something that does not work. I’ve explained this before in more detail here, but for any well-studied topic, there is going to be statistical noise. There are going to be papers that disagree and statistical outliers, but, when a treatment actually works, we expect a strong agreement that it works (a consistent body of evidence) with a large, reliable effect size, and a few dissenting papers that usually have small sample sizes, biases, or other methodological issues. In contrast, when something doesn’t work, we expect most studies to find no effect, with a mixture of small effect sizes and low significance, especially when looking at lower-quality studies. Note that the expectation is never 100% agreement.
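A quick simulation shows why a handful of "significant" papers is expected even for a treatment that does nothing. The Python sketch below is purely illustrative: the sample size, event rate, and number of studies are arbitrary assumptions, the function names are hypothetical, and the confidence interval uses the standard large-sample approximation on the log risk ratio.

```python
import math
import random

def simulate_null_study(rng, n_per_arm=200, event_rate=0.2):
    """Simulate one two-arm trial where treatment and control share the SAME true risk.

    Returns (risk_ratio, ci_low, ci_high), or None if either arm has zero events.
    """
    treated = sum(rng.random() < event_rate for _ in range(n_per_arm))
    control = sum(rng.random() < event_rate for _ in range(n_per_arm))
    if treated == 0 or control == 0:
        return None  # risk ratio is undefined; skip this study
    rr = (treated / n_per_arm) / (control / n_per_arm)
    # Large-sample standard error of log(RR).
    se = math.sqrt(1 / treated - 1 / n_per_arm + 1 / control - 1 / n_per_arm)
    low = math.exp(math.log(rr) - 1.96 * se)
    high = math.exp(math.log(rr) + 1.96 * se)
    return rr, low, high

def fraction_significant(n_studies=1000, seed=0):
    """Fraction of simulated null studies whose 95% CI excludes 1."""
    rng = random.Random(seed)
    studies = [s for s in (simulate_null_study(rng) for _ in range(n_studies)) if s]
    hits = sum(1 for _, low, high in studies if high < 1 or low > 1)
    return hits / len(studies)

print(f"{fraction_significant():.1%} of null studies looked 'significant'")
```

With a 95% confidence interval, somewhere around 1 in 20 studies of a completely ineffective treatment should clear the significance bar by chance alone, split between apparent benefits and apparent harms. That is why scattered, marginal positives are not compelling on their own.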

We can see these two predictions play out nicely in the topics of this post. Look at the difference in the evidence base for masks and vaccines compared to ivermectin and hydroxychloroquine. Sure, you can find a few studies that disagree on masks and vaccines, but the overarching effect is strong agreement among the highest quality studies that there is a substantial and consistent benefit. In contrast, on ivermectin and hydroxychloroquine, sure you can find a few weak studies that suggest they might have a benefit, but the overarching picture is that the majority of studies show that they don’t work, and the studies that say they do have such small effect sizes and/or marginal significance that they are not compelling. Stated another way, what this body of evidence shows is that if there is a benefit to ivermectin and hydroxychloroquine for treating COVID, it is very small and unreliable, which contrasts strongly with the large, reliable benefits we see for masks and vaccines.

Conclusions and significance

To sum all of this up, numerous large studies have vindicated scientists’/doctors’/health agencies’ decisions during COVID. Mask mandates and the COVID vaccines saved millions of lives, and refusing to implement them or delaying them would have cost lives. Many more lives could have been saved if more people had masked and been vaccinated. Conversely, ivermectin and hydroxychloroquine have failed to live up to their hype. It was wildly irresponsible of prominent politicians to promote them and claim, without evidence, that they were effective at dealing with COVID. I can’t help but wonder how many people needlessly died because they listened to politicians, not scientists, and as a result, relied on ivermectin or hydroxychloroquine instead of vaccines.

Beyond those deaths, just think about how much funding has been squandered studying ivermectin and hydroxychloroquine. There are hundreds of papers on them, easily having required millions if not billions of dollars and incalculable effort. How much better would we all be if that much effort and money had been invested in more promising treatments? Under normal circumstances, that is what would have happened. Scientists would have conducted some trials, found little or no evidence that they worked, and moved on. However, because it became a political issue with one side baselessly insisting that those treatments worked, scientists felt obligated to study them exhaustively and put way more effort into it than they would have otherwise.

The point is that, taken as a whole, we are clearly far better off relying on scientific studies than conspiracy theories, and listening to actual scientists and doctors than to politicians and pundits who think they know better than experts. Millions of lives were saved thanks to scientists, and countless more could have been saved if politicians backed scientists instead of attacking scientists/doctors and sowing fear, doubt, and conspiracy theories.

Literature cited

- Alami et al. 2023. Risk of myocarditis and pericarditis in mRNA COVID-19-vaccinated and unvaccinated populations: a systematic review and meta-analysis. BMJ Open 13:e065687

- Amanzio et al. 2022. Adverse events of active and placebo groups in SARS-CoV-2 vaccine randomized trials: A systematic review. The Lancet Regional Health 12:100253

- Baier et al. 2022. Effectiveness of Mask-Wearing on Respiratory Illness Transmission in Community Settings: A Rapid Review. Disaster Medicine and Public Health Preparedness 17:e96

- Bello et al. 2023. Adverse Events Related to SARS-Cov-2 Vaccination: A Systematic Review and Meta-Analysis. Journal of Epidemiology and Public Health 8:284-297

- Castonguay et al. 2024. Estimated public health impact of concurrent mask mandate and vaccinate‑or‑test requirement in Illinois, October to December 2021. BMC Public Health 24:1013

- Chen et al. 2024. Universal masking during COVID-19 outbreaks in aged care settings: A systematic review and meta-analysis. Ageing Research Reviews 93:102138

- Coccia 2021. Optimal levels of vaccination to reduce COVID-19 infected individuals and deaths: A global analysis. Environ Res 204:112314

- Deng et al. 2021a. Efficacy and safety of ivermectin for the treatment of COVID-19: a systematic review and meta-analysis. QJM: An International Journal of Medicine 114:721–732

- Deng et al. 2021b. Efficacy of chloroquine and hydroxychloroquine for the treatment of hospitalized COVID-19 patients: a meta-analysis. Future Virol.

- Ford et al. 2021. Mask use in community settings in the context of COVID-19: A systematic review of ecological data. eClinicalMedicine 38:101024

- Garcia-Albeniz et al. 2022. Systematic review and meta-analysis of randomized trials of hydroxychloroquine for the prevention of COVID-19. Eur. J. Epidemiol. 37:789-796

- Haas et al. 2022. Frequency of Adverse Events in the Placebo Arms of COVID-19 Vaccine Trials: A Systematic Review and Meta-analysis. JAMA Network Open 5:e2143955

- Hajmohammadi et al. 2023. Effectiveness of Using Face Masks and Personal Protective Equipment to Reducing the Spread of COVID 19: A Systematic Review and Meta Analysis of Case–Control Studies. Advanced Biomedical Research 12:36

- Hong 2023. Safety and efficacy of hydroxychloroquine as prophylactic against COVID-19 in healthcare workers: a meta-analysis of randomized clinical trials. BMJ Open 13:e065305

- Huang and Kuan 2022. Vaccination to reduce severe COVID-19 and mortality in COVID-19 patients: a systematic review and meta-analysis. European Review for Medical and Pharmacological Sciences 26: 1770-1776.

- Huang et al. 2022. The Effectiveness of Government Masking Mandates On COVID-19 County-Level Case Incidence Across The United States, 2020. Health Affairs 41: 445-453

- Ikeokwu et al. 2023. A Meta-Analysis To Ascertain the Effectiveness of COVID-19 Vaccines on Clinical Outcomes in Patients With COVID-19 Infection in North America. Cureus 15:e41053

- Izcovich et al. 2022. Adverse effects of remdesivir, hydroxychloroquine and lopinavir/ritonavir when used for COVID-19: systematic review and meta-analysis of randomised trials. BMJ Open 12:e048502

- Juan Gao et al. 2023. A Systematic Review and Meta-analysis of the Association Between SARS-CoV-2 Vaccination and Myocarditis or Pericarditis. American Journal of Preventive Medicine 64:275-284

- Kashour et al. 2020. Efficacy of chloroquine or hydroxychloroquine in COVID-19 patients: a systematic review and meta-analysis. J Antimicrob Chemother

- Kaushik et al. 2024. Effect of Hydroxychloroquine and Azithromycin Combination Use in COVID-19 Patients – An Umbrella Review. Indian Journal of Community Medicine 49:22-27.

- Kouhpaye and Ansari 2022. Adverse events following COVID-19 vaccination: A systematic review and meta-analysis. Int. Immunopharmacol. 109:108906

- Lamptey 2021. Post-vaccination COVID-19 deaths: a review of available evidence and recommendations for the global population. Clin. Exp. Vaccine Res. 10:254-275

- Lu et al. 2023. Masking strategy to protect healthcare workers from COVID-19: An umbrella meta-analysis. Infection, Disease, and Health 28: 226-238.

- Lucchetta et al. 2023. Hydroxychloroquine for Non-Hospitalized COVID-19 Patients: A Systematic Review and Meta-Analysis of Randomized Clinical Trials. Arq. Bras. Cardiol. 12:e20220380

- Lyu and Wehby 2020. Community Use Of Face Masks And COVID-19: Evidence From A Natural Experiment Of State Mandates In The US. Health Affairs 39:1419-1425

- Marcolino et al. 2022. Systematic review and meta‑analysis of ivermectin for treatment of COVID‑19: evidence beyond the hype. BMC Infectious Diseases 22:639

- Mesle et al. 2024. Estimated number of lives directly saved by COVID-19 vaccination programmes in the WHO European Region from December, 2020, to March, 2023: a retrospective surveillance study. Lancet Respir. Med. 12:714-727

- Mitja et al. 2022. Hydroxychloroquine for treatment of non-hospitalized adults with COVID-19: A meta-analysis of individual participant data of randomized trials. Clin Transl Sci 2023:524-535

- Notarte 2022. Impact of COVID-19 vaccination on the risk of developing long-COVID and on existing long-COVID symptoms: A systematic review. eClinical Medicine 53: 101624

- Peng et al. 2024. Impact of community mask mandates on SARS-CoV-2 transmission in Ontario after adjustment for differential testing by age and sex. PNAS Nexus 3:1-8

- Piechotta et al. 2023. Safety and effectiveness of vaccines against COVID-19 in children aged 5–11 years: a systematic review and meta-analysis. Lancet Child Adolesc. Health 7:379-91.

- Patone et al. 2022. Risks of myocarditis, pericarditis, and cardiac arrhythmias associated with COVID-19 vaccination or SARS-CoV-2 infection. Nature Medicine 28: 410-422

- Rader et al. 2021. Mask-wearing and control of SARS-CoV-2 transmission in the USA: a cross-sectional study. The Lancet Digital Health 3: e148-e157

- Rahmani et al. 2022. The effectiveness of COVID-19 vaccines in reducing the incidence, hospitalization, and mortality from COVID-19: A systematic review and meta-analysis. Frontiers in Public Health

- Ramdani and Bouazza 2023. Hydroxychloroquine and COVID-19 story: is the low-dose treatment the missing link? A comprehensive review and meta-analysis. Archives of Pharmacology 397:1181–1188.

- Roman et al. 2021. Ivermectin for the Treatment of Coronavirus Disease 2019: A Systematic Review and Meta-analysis of Randomized Controlled Trials. Clinical Infectious Diseases

- SeyedAhmad et al. 2023. The Effectiveness of Face Masks in Preventing COVID-19 Transmission: A Systematic Review. Infectious Disorders 23:19-29

- Shafiee et al. 2022. Ivermectin under scrutiny: a systematic review and meta-analysis of efficacy and possible sources of controversies in COVID-19 patients. 19:102

- Song et al. 2024. Ivermectin for treatment of COVID-19: A systematic review and meta-analysis. Cell 10:e27647

- Toubasi et al. 2022. Efficacy and safety of COVID‐19 vaccines: A network meta‐analysis. J. Evid. Based Med. 14:245-262

- Viera 2024. Effect of Face Mask on Lowering COVID-19 Incidence in School Settings: A Systematic Review. Journal of School Health 94: 878-888

- Voleti et al. 2022. Myocarditis in SARS-CoV-2 infection vs. COVID-19 vaccination: A systematic review and meta-analysis. Frontiers Cardiovascular Medicine 9

- Watanabe et al. 2023. Protective effect of COVID-19 vaccination against long COVID syndrome: A systematic review and meta-analysis. Vaccine 41:1783-1790.

- Watson et al. 2022. Global impact of the first year of COVID-19 vaccination: a mathematical modelling study. Lancet 22:1293-1302

- Wu et al. 2023. A systematic review and meta-analysis of the efficacy of N95 respirators and surgical masks for protection against COVID-19. Preventive Medicine Reports 36:102414

- Wu et al. 2023. Long-term effectiveness of COVID-19 vaccines against infections, hospitalisations, and mortality in adults: findings from a rapid living systematic evidence synthesis and meta-analysis up to December, 2022. The Lancet Respir. Med. 11:439-452

- Xu et al. 2023. A systematic review and meta-analysis of the effectiveness and safety of COVID-19 vaccination in older adults. Frontiers Immunology 14

- Zeilinger et al. 2023. Effectiveness of cloth face masks to prevent viral spread: a meta-analysis. Journal of Public Health 46:e84-e90.

- Zheng et al. 2022. Real-world effectiveness of COVID-19 vaccines: a literature review and meta-analysis. International Journal of Infectious Diseases 114:252-260.

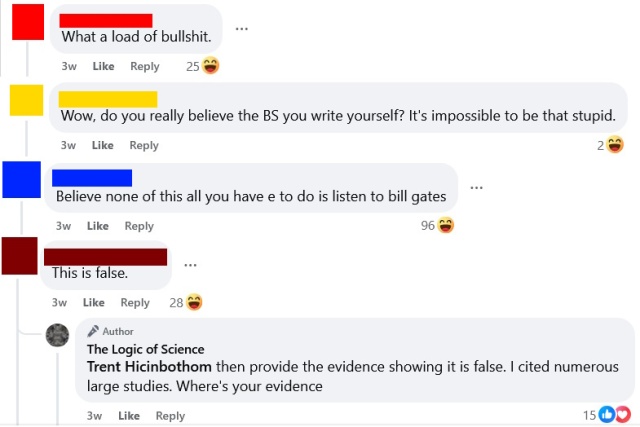

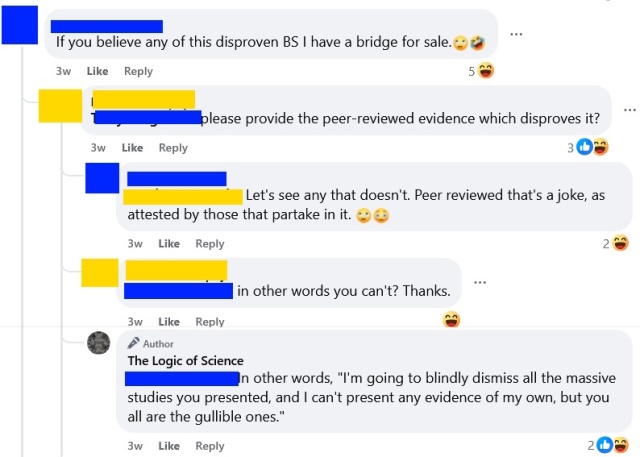

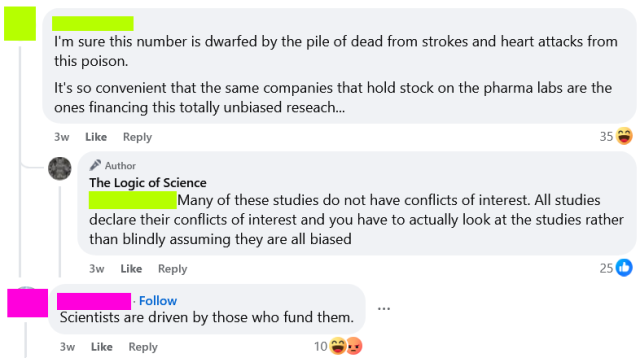

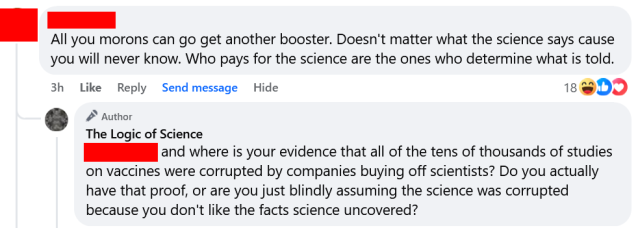

In recent conversations on this page, I have been struck by just how intellectually lazy science-deniers usually are. This is hardly a novel observation, but I think it bears discussion. I also want to note that this sort of lazy thinking is common in politics and countless other topics, and it is very easy to fall into these bad habits. Critical thinking is a skill, and like most skills, it requires practice. Being well-informed takes hard work. Blind adherence to biases and preconceptions is much easier than rigorous fact-checking and serious contemplation. We are all prone to cognitive biases, but if we want to have rational views based on evidence and logic, then we need to acknowledge those tendencies and fight against them. We need to be humble and acknowledge the limits of our personal knowledge and be intellectually diligent and honest. Blind denial of any information you don’t like is easy and seductive, but it is not rational or intellectually rigorous.

I want to briefly discuss a logical fallacy that is surprisingly common, despite being so obviously absurd. I suspect that most people committing this fallacy do so without ever actually contemplating what they are saying, and it is my hope that discussing this fallacy will help people to both spot and avoid it.
