Over and over again when discussing climate change, I encounter Americans who insist that there is no point in doing anything because China and India are the real problem. This claim takes various forms, but it generally includes two assertions: that America’s emissions are tiny compared to China’s and India’s, and that there is no point in the USA doing anything because countries like China and India will never change. When you start looking at the numbers and actually examining the facts, however, this argument utterly falls apart. It is simply a cop-out excuse for not taking action. I wrote about this several years ago, but the numbers have shifted since then, so it is time for an update.

At the start, I want to make it clear that I am not trying to “vilify” America or claim that countries like China don’t play a substantial role in climate change. There is plenty of blame to go around, and while China is taking action (more on that later), there is a lot more that they can and should do. However, America also plays a huge, outsized role in climate change, and it is disingenuous and dangerous to blame others rather than taking responsibility for our actions. All countries need to work together to solve this problem, but some countries (like the USA) have contributed an outsized proportion of the world’s greenhouse gas emissions.

With that said, let me outline some core points:

- India produces far fewer emissions than the USA both in total and per capita. So, if you are claiming that India is worse than the USA, you are simply wrong on the facts.

- China does contribute more than the USA in terms of total greenhouse gas emissions, but that is a fairly recent development and China lags way behind the USA in terms of per capita emissions.

- China is building many new coal power plants and increasing their emissions, but they are also investing very heavily in renewable energy. So, the claim that they aren’t taking action is false.

- Even if none of the points above were true, that would not absolve Americans of their duty to take responsibility for their own actions. “Other people were doing it too” has never been a valid excuse for unethical behavior.

Data source: For this post, I will be using: Crippa, M., Guizzardi, D., Pagani, F., Banja, M., Muntean, M., Schaaf, E., Quadrelli, R., Risquez Martin, A., Taghavi-Moharamli, P., Grassi, G., Rossi, S., Melo, J., Oom, D., Branco, A., Suarez Moreno, M., Sedano, F., San-Miguel, J., Manca, G., Pisoni, E., Pekar, F., GHG emissions of all world countries – JRC/IEA 2025 Report, Luxembourg, 2025, https://data.europa.eu/doi/10.2760/9816914

Note: In this post, I am talking specifically about greenhouse gas emissions. Other topics such as plastic pollution and air quality in cities (e.g., the gases that cause smog) are separate issues that are irrelevant to the discussion at hand.

Total emissions over time

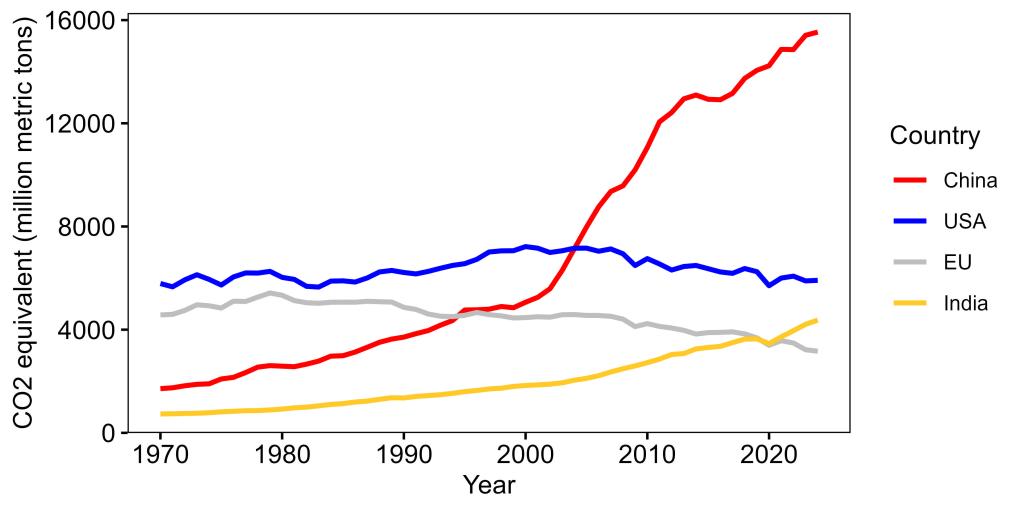

There are several ways we can look at the data, but let’s start by looking at total emissions over time. The three biggest emitters are China, the USA, and India, so I will focus on them while also including the countries currently in the European Union (EU) as a reference point (Figure 1). When we do that, several things become obvious.

First, China obviously has had a dramatic increase in greenhouse gas emissions. To that extent, there is some truth to the claim that they are having the biggest impact, but there are several other critical factors (like population size) that we have to take into account to get a full picture (more on that in a minute).

Second, shifting the blame from the USA to India makes no sense and is at odds with the facts. It is simply not true that India produces more greenhouse gases than the USA. Here again, India is admittedly increasing its greenhouse gas production, but as with China, there are other factors to consider (again, more in a minute).

Meanwhile, the USA has had a moderate emissions decrease since its peak in the 2000s, but its emissions are still higher than their 1970 level, whereas the EU’s emissions have been consistently lower than the USA’s and have declined more strongly.

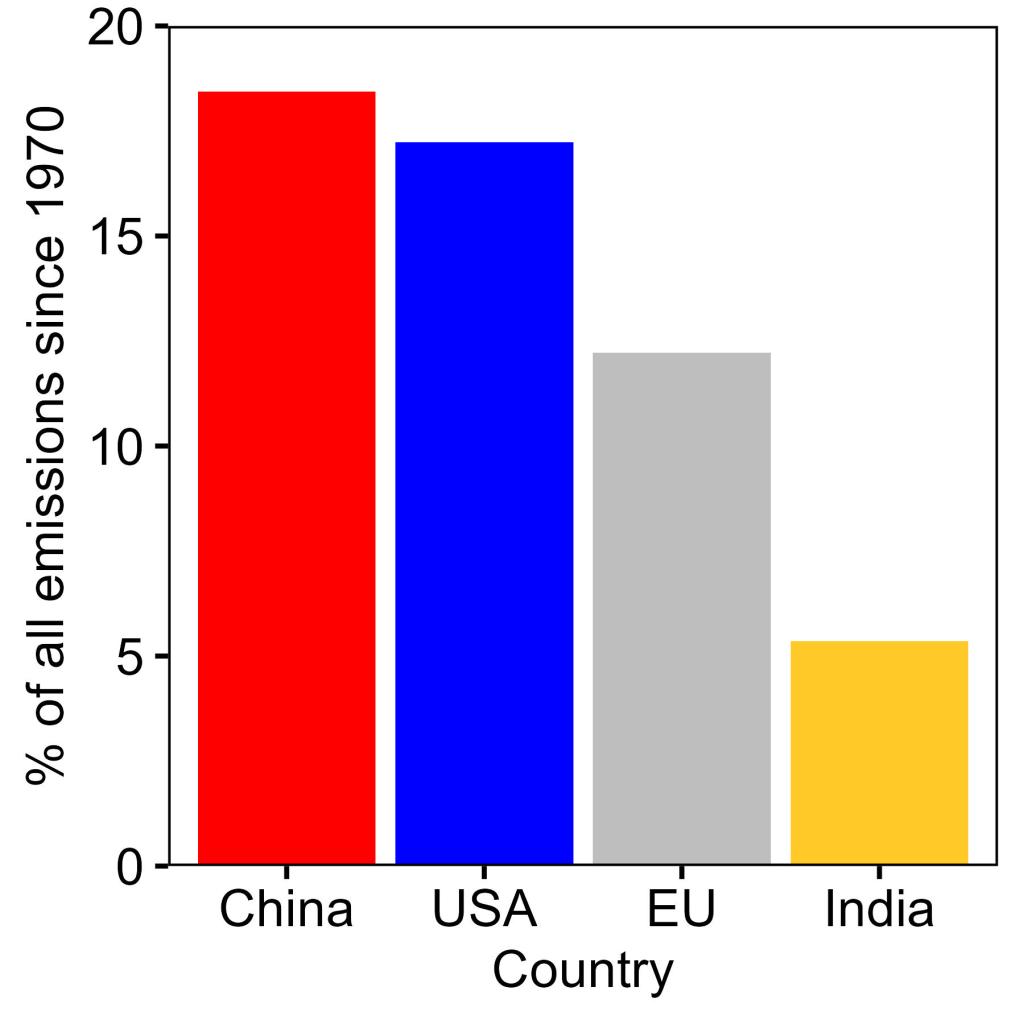

To really understand which countries have had the biggest role in climate change, however, we need to look not simply at the trends over time, but also at each country’s total contribution. So, let’s sum each country’s greenhouse gas emissions over time. When we do that (Figure 2), we find that India has actually contributed only 5.4% of the world’s total emissions. Meanwhile, China leads with 18.4% and the USA is only slightly behind at 17.2%. So, while China has produced more emissions than the USA, it is not even remotely true that China is the key polluter while the USA’s contribution is minuscule in comparison.
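The summing step can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not the code behind Figure 2: the function name `cumulative_shares` is made up for this example, and the numbers are placeholders in arbitrary units rather than values from the JRC/IEA report.

```python
from typing import Dict, List


def cumulative_shares(series: Dict[str, List[float]], world: List[float]) -> Dict[str, float]:
    """Return each country's share (in percent) of the summed world emissions."""
    world_total = sum(world)
    if world_total <= 0:
        raise ValueError("world total must be positive")
    return {name: 100.0 * sum(values) / world_total for name, values in series.items()}


# Placeholder numbers, NOT real data: three years of emissions, arbitrary units.
# "A" is rising and "B" is falling, yet both have emitted the same cumulative amount.
toy_series = {"A": [10.0, 20.0, 30.0], "B": [30.0, 20.0, 10.0]}
toy_world = [100.0, 100.0, 100.0]

toy_shares = cumulative_shares(toy_series, toy_world)
print(toy_shares)
```

The toy case shows why trends alone can mislead: a country whose emissions are currently rising and one whose emissions are falling can have the same cumulative contribution.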

Emissions per capita

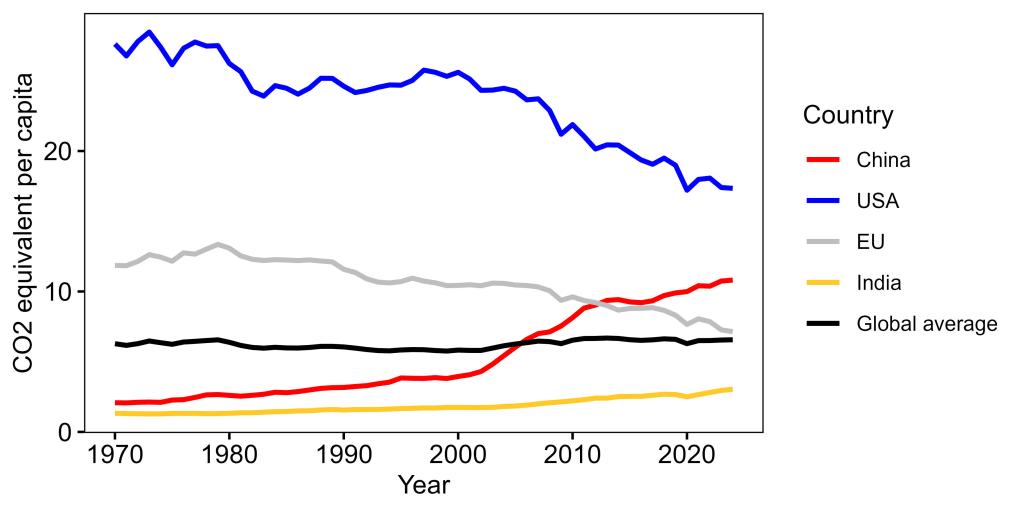

So far, we have only looked at totals, but to get a complete picture, we have to look at emissions per person. Obviously, a country with a larger population will be expected to produce more emissions.

Put another way, if two countries had identical environmental laws and regulations, but one country had twice the population of the other, we’d obviously expect the more populous country to produce more emissions, even though the environmental policies were the same. That’s just simple math.

So, when looking at these emissions, we also have to account for the fact that the USA has a smaller population than the EU and a much smaller population than India or China.

Looking at emissions per capita paints a very different picture (Figure 3). For reference, I have included a line showing global emissions per capita (all countries combined). Any country above that line is contributing more than its fair share to climate change, while any country under that line is contributing less than expected based on population size. Compared to that line, the USA is an egregious offender. Its emissions per capita are, fortunately, declining, but they are consistently way above the global average. Meanwhile, India consistently sits way below the global average, and the EU has declined to the point that it is just barely above the global average. China is, unfortunately, increasing, but it has only recently risen above the global average and still lags well below the USA. Again, China’s increase is a problem, and I’m not saying that it isn’t, but trying to place all the blame on China while ignoring the USA’s massive role is dishonest.

To put this another way, the USA has 4.2% of the world’s population but has produced 17.2% of the world’s total greenhouse gas emissions (since 1970). Meanwhile, China has 17.0% of the world’s population and has contributed 18.4% of total emissions. India lags way, way behind, with 17.7% of the world’s population, but only 5.4% of the world’s total emissions.
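To make that comparison concrete, here is a small Python sketch that divides each country's share of emissions by its share of population, using only the percentages quoted above. The "disproportion" ratio is my own shorthand, not a standard metric, and it is a rough index: it sets a current population share against emissions summed since 1970, even though population shares have shifted over that period.

```python
# Percentages quoted in the post. Emissions shares are cumulative since 1970.
shares = {
    "USA": {"population": 4.2, "emissions": 17.2},
    "China": {"population": 17.0, "emissions": 18.4},
    "India": {"population": 17.7, "emissions": 5.4},
}


def disproportion(population_pct: float, emissions_pct: float) -> float:
    """Emissions share divided by population share (1.0 means proportional)."""
    return emissions_pct / population_pct


ratios = {
    country: round(disproportion(v["population"], v["emissions"]), 2)
    for country, v in shares.items()
}

for country, ratio in ratios.items():
    print(f"{country}: {ratio:.2f}x its population share")
```

With these inputs, the USA comes out at roughly 4.1 times its population share, China at roughly 1.1 times, and India at roughly 0.3 times.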

Stated yet another way, as of 2024 (the last year for which I have data), an average American produced 1.6x as many greenhouse gas emissions as someone in China, 2.4x as many as someone in the EU, and 5.7x as many as someone in India! So don’t tell me that China and India are the “real” problem.
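Those three multipliers also imply how the other regions compare with each other. The Python sketch below derives those cross-comparisons from the rounded figures quoted above, so the results are approximate. The helper name `compare` is just for illustration.

```python
# Multipliers quoted in the post for 2024: how many times more an average
# American emits than an average person in each region.
us_multiple_of = {"China": 1.6, "EU": 2.4, "India": 5.7}

# Express per capita emissions relative to the USA (USA = 1.0).
relative_to_us = {"USA": 1.0}
relative_to_us.update({region: 1.0 / m for region, m in us_multiple_of.items()})


def compare(a: str, b: str) -> float:
    """How many times more an average person in `a` emits than one in `b`."""
    return relative_to_us[a] / relative_to_us[b]


for a, b in [("China", "India"), ("EU", "India"), ("China", "EU")]:
    print(f"{a} vs {b}: {compare(a, b):.1f}x")
```

Under these rounded inputs, an average person in China emits roughly 3.6 times as much as someone in India and roughly 1.5 times as much as someone in the EU, while remaining well below the American figure.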

Again, I’m not saying that America is the only country to blame, but it is undeniable that it is playing an outsized role relative to its population size and it is silly and dishonest to pretend that other countries are the real problem. Further, all of this is before we even get into details like many of China’s emissions resulting from the production of products that are shipped overseas to satiate America’s rampant consumerism.

China is investing in renewable energy

Finally, while it is true that China is building more coal power plants, they are also one of the world leaders in investing in renewable energy. In 2024, China invested $625 billion in renewable energy, representing 31% of the world’s total investment (again, keeping in mind that they have 17.0% of the world’s population, thus representing an outsized investment). Indeed, in 2024, 84% of their electricity demand growth was met by their investment in renewables.

Here again, I’m not arguing that China is a shining example. Obviously, they still have a long way to go, and China is a massive contributor to climate change. However, it is completely dishonest to pretend that they aren’t taking steps to curb their emissions or that they are the “real” problem.

Americans often seem to think that the USA is the only country investing in fighting climate change and everyone else is to blame, but the actual facts and numbers paint a completely different picture. Even with China’s increasing fossil fuel use, its per capita emissions are still much lower than the USA’s, and China is investing heavily in renewable energy. The USA has contributed and continues to contribute a disproportionate amount of fossil fuel emissions and has a very, very long way to go before it can point fingers at other countries.

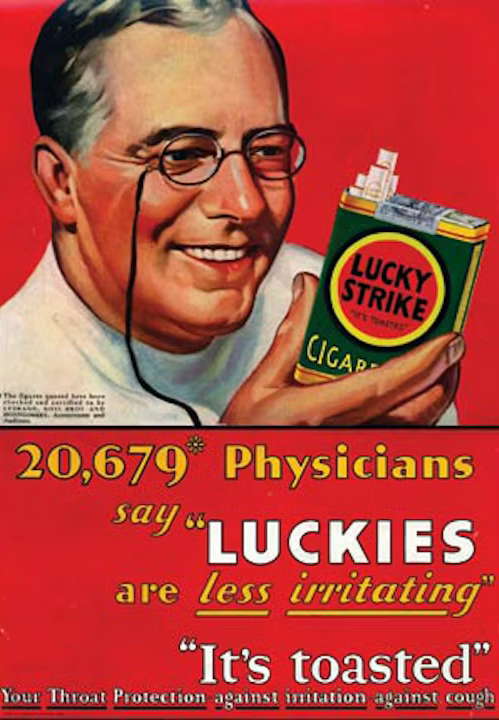
