“Placebo effect” is a term that almost everyone knows but few seem to understand. Misconceptions about placebo effects are rampant and usually center around the idea that a placebo effect occurs when you feel better because you thought a treatment would work. In reality, there are multiple types of placebo effects, many of which have nothing to do with whether or not you expect a treatment to work.

Understanding this is important, because misconceptions about placebo effects lead to erroneous arguments and poor medical decisions. These misconceptions are commonly manifested in the argument that, “a placebo effect is still an effect.” This argument is used as a justification for the continued use of treatments that have failed scientific testing because, according to it, even if the treatment only produces a placebo effect, that effect is still beneficial. As we will see, however, this argument is oxymoronic and completely falls apart once you understand what placebo effects actually are.

Another common argument asserts that a treatment must actually work because benefits have been seen in young children and/or pets who can’t possibly have expected the treatment to work. Likewise, I often hear people make statements like, “well I didn’t think it would work, but I still got better, so it can’t have been a placebo effect.” Both of these arguments are, again, based on the misconception that placebo effects simply mean getting better because you think you will get better. As we will see, the reality of the situation is far more complicated.

What are placebo effects?

You may have noticed that I keep saying “placebo effects” (plural). I’m doing that because the “placebo effect” is actually a collection of lots of different factors that we shove into a single category for convenience. To borrow a definition from Science-Based Medicine,

“A placebo effect is any health effect measured after an intervention that is something other than a physiological response to a biologically active treatment.”

In other words, “placebo effect” is a broad, catch-all term for any measured change in a patient that is caused by something other than an actual, biological effect of the treatment. This is an inherently wide definition, and there are lots of different types of placebo effects that contribute to that change.

Let me elaborate with an example. Suppose I think that diseases are caused by an electrical imbalance and that shocking yourself with an electric current will cure you. So, I get people who are sick or in pain to come to me, I zap them, pronounce them healed, and they go on their way. Within a few days, many notice that they are feeling much better. Some may even find that real doctors run tests and conclude that their condition has improved.

Did my treatment work? Maybe, but we can’t actually conclude anything from those anecdotes because there are other possible explanations for that result. Let’s tally up some of those possibilities:

- Biased reporting (i.e., people who feel better are more likely to go online and post about their miracle cures)

- Spontaneous remission that has nothing to do with the treatment

- People sought treatment when their symptoms were at their worst and, as a result, they would have felt better in a few days regardless (i.e., regression to the mean)

- They made some other change in lifestyle, diet, work, etc. that caused the improvement

- There were measurement errors or misdiagnoses by the doctors during the initial visit or the follow up

- They feel better because they think they are supposed to feel better (the classic placebo effect most people think of)

This is why anecdotes simply are not valid evidence of causation. If we actually want to know whether shocking someone with electricity (or any other treatment) works, we have to recruit a large group of people, randomly assign half of them to receive the treatment while the other half receives a placebo (without either group or, ideally, the doctors knowing who is in which group), and control for factors like age, sex, other health conditions, and other medications. When we do that, we will probably still find that there is a change in our placebo group over time. That change will be caused by some combination of factors like the ones listed above. Some people might feel better simply because they thought they should feel better. Others may have improved because of some other change. Others may have sought treatment when their symptoms were at their worst, so they would have felt better over time regardless, etc.

All of those things are types of placebo effects, the sum of which gives the total placebo effect in that experiment. It is the background change in patients that has nothing whatsoever to do with an actual biological response to the treatment being tested. So, for the treatment to be effective, it must, by definition, produce an effect greater than the placebo effect. This is why we do placebo-controlled trials: to tell us whether the recovery rate from the treatment is greater than the background recovery rate without the treatment.
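To make that comparison concrete, here is a minimal simulation sketch. Everything in it is a made-up assumption for illustration (the function names, the sample sizes, the 2-point background improvement, and the noise level); it is not data from any real trial. It simply shows that both arms can improve substantially while the estimated treatment effect, which is the difference between the arms, stays at roughly zero.

```python
import random
import statistics


def simulate_arm(n, background_change, treatment_effect, noise_sd, rng):
    """Return each patient's change in symptom score (negative = improvement)."""
    return [
        background_change + treatment_effect + rng.gauss(0, noise_sd)
        for _ in range(n)
    ]


def estimated_treatment_effect(treated, placebo):
    """Difference in mean change between the treated arm and the placebo arm."""
    return statistics.mean(treated) - statistics.mean(placebo)


rng = random.Random(42)

# Hypothetical numbers: everyone improves by about 2 points from background
# factors alone (regression to the mean, expectation, and so on), and the
# treatment being tested adds nothing on top of that.
placebo = simulate_arm(5000, -2.0, 0.0, 1.5, rng)
treated = simulate_arm(5000, -2.0, 0.0, 1.5, rng)

print(f"placebo arm mean change: {statistics.mean(placebo):.2f}")
print(f"treated arm mean change: {statistics.mean(treated):.2f}")
print(f"estimated treatment effect: {estimated_treatment_effect(treated, placebo):.2f}")
```

If you only looked at the treated arm, you would see an improvement of about 2 points and might conclude that the treatment works. The placebo arm is what reveals that the same improvement happens without it.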

Do you see why that automatically makes statements like, “a placebo effect is still an effect” utter nonsense? The placebo “effect” is an experimental construct. It’s just a measure of the background noise in the system so that we can tell whether or not a treatment actually works. It is madness to try to claim that the background noise in the system is a legitimate therapy!

Regression to the mean

In case I haven’t made my point entirely clear, I’m going to focus for a minute on one of the most common types of placebo effects: regression to the mean. That is a fancy term that basically just means that things usually return to their normal state over time even without intervention (think of it as “return to the average”).

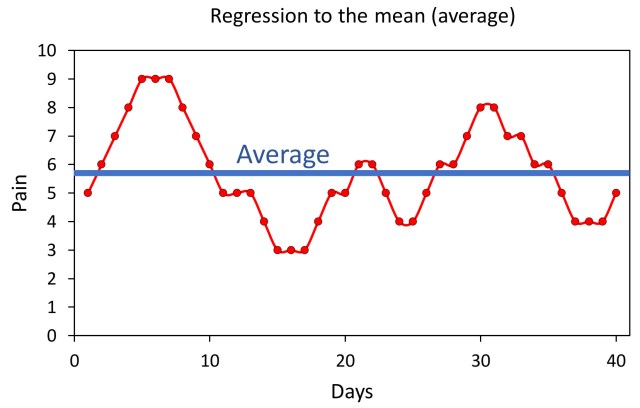

Chronic pain provides a good example of this (see figure). People with chronic conditions typically have good days and bad days. There are days where they are in lots of pain, and those days eventually give way to days with less pain. If we plot the pain over time, we get a wave-like graph with pain oscillating around an average value. Thus, if you start at any given point on the graph and wait long enough, it will eventually go back to the average value (i.e., it regresses to the mean). Critically, times with the worst pain are, by definition, followed by times with less pain. In other words, anything you do when the pain is at its worst will inherently eventually be followed by days with less pain.

This is where things become important for understanding placebos (and anecdotes). People are much more likely to seek treatment (including trying unconventional treatments or enrolling in a clinical trial) when they are experiencing the worst symptoms, but, as we’ve just established, they would have felt better several days or weeks later regardless of the treatment simply because of regression to the mean!

Figure: Chronic pain provides a good illustration of regression to the mean. People with chronic conditions tend to have some good days and some bad days that oscillate around an average (mean) value. Thus, from any given point on the graph, if you wait long enough, the condition will eventually return (regress) to the average value.

Look at the figure for a second and imagine you are the person on day seven. You’ve had a really rough week of severe pain. You’re now desperate enough to try anything, even an alternative treatment of which you are skeptical. So, you try my electric shock therapy, or acupuncture, or homeopathy, or anything else you can think of to relieve your suffering, and, by the next day, like a miracle, you are already feeling better. A few days later, you’re feeling better than you’ve felt in a long time. You might, naturally, conclude that the treatment actually worked. At a quick glance that seems like a perfectly reasonable conclusion, and it’s totally understandable that so many people fall for it, but as you can see in the graph, the treatment didn’t actually work! The condition simply regressed to the mean, and you would have improved even without it.

Conversely, people who are currently feeling good and are at the low points in the waves are much less likely to seek unconventional treatments. If they did, regression to the mean would often make it appear that the treatments made things worse. This disparity in when people are the most likely to seek treatment creates a strong bias towards treatments “working” in both anecdotes and clinical studies, and it is one of the many reasons why it is so critical to run placebo-controlled trials so that we can measure those background changes and test whether the treatment is producing a real improvement.
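This selection effect is easy to reproduce in a small simulation. The sketch below is only an illustration under assumed numbers: the average pain score, the day-to-day persistence, the noise level, and the "bad day" and "good day" thresholds are all hypothetical, and the simple model of pain drifting around an average is my simplification rather than a model of any real condition. Nobody in the simulation receives any treatment.

```python
import random
import statistics

MEAN_PAIN = 5.0      # hypothetical long-run average pain score
PERSISTENCE = 0.7    # how much of today's deviation carries into tomorrow
DAILY_NOISE = 1.0    # standard deviation of the day-to-day random shock


def simulate_pain(days, rng):
    """Untreated pain series that fluctuates around MEAN_PAIN."""
    deviation = 0.0
    series = []
    for _ in range(days):
        deviation = PERSISTENCE * deviation + rng.gauss(0, DAILY_NOISE)
        series.append(MEAN_PAIN + deviation)
    return series


def change_after(series_list, day, follow_up, keep):
    """Mean change from `day` to `day + follow_up` among patients selected by `keep`."""
    changes = [
        s[day + follow_up] - s[day]
        for s in series_list
        if keep(s[day])
    ]
    return statistics.mean(changes)


rng = random.Random(0)
patients = [simulate_pain(60, rng) for _ in range(5000)]

# Compare people who "seek treatment" on a bad day (pain >= 7) with people
# who do so on a good day (pain <= 3). No one is actually treated.
bad_day = change_after(patients, day=30, follow_up=14, keep=lambda p: p >= 7.0)
good_day = change_after(patients, day=30, follow_up=14, keep=lambda p: p <= 3.0)

print(f"started on a bad day:  mean change {bad_day:+.2f}")
print(f"started on a good day: mean change {good_day:+.2f}")
```

People selected on a bad day show a large average drop in pain two weeks later, and people selected on a good day show a large average increase, even though the simulation contains no treatment at all. The only thing that differs between the groups is when they were enrolled.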

The common cold provides another excellent example. Countless times I’ve heard people insist that some quack treatment cures colds because they had a really bad cold, and nothing was helping, then they took this treatment, and in a few days, they felt way better. Well, of course they felt better in a few days; that’s how long colds last! Also, if they’d already been suffering for several days, then they were probably at the tail end of it anyway, so even a fairly rapid recovery after the quack treatment isn’t surprising. It is easily explainable once you understand regression to the mean.

My point here is two-fold. First, notice that regression to the mean has utterly nothing whatsoever to do with either getting better because you think you should get better or with an actual effect of the treatment. It is literally just what would happen if you did nothing. This is why arguing that a treatment is valuable even if it is “just a placebo” is madness. When something is studied and found to be no better than a placebo, things like regression to the mean are a part of that placebo effect being measured, and they are clearly not valid therapies.

Second, regression to the mean is responsible for a lot of anecdotes. People frequently tell me with great conviction about how they had suffered for a long time and nothing had worked until they tried X. They often say that they didn’t think X would work, but they became so desperate that they tried it anyway, and afterwards they felt better! As you can hopefully now see, that sort of situation is to be expected from regression to the mean. If people seek treatment when things are at their worst, there is no place to go but better.

To be clear, this doesn’t automatically mean that those treatments don’t work. Rather my point is simply that the anecdotes are not valid evidence that they do work. Science is all about eliminating possibilities so that you can be confident in the conclusion. We have to conduct properly controlled trials to actually test the treatments, and if the treatments fail those tests, we can then be confident that the anecdotes are from factors like regression to the mean, rather than from the treatment actually working.

Pets, children, and unbelievers

At this point, I want to directly address the arguments that, “it can’t have been a placebo effect because it worked on animals/children/someone who didn’t think it would work.”

I’ve described several types of placebo effects throughout this post that have nothing to do with belief or a conscious awareness of what is going on (e.g., regression to the mean), and those are already sufficient to deal with these arguments, but for thoroughness, I want to bring up a few additional points.

The first is something known as placebo by proxy. Children and many animals (e.g., cats and dogs) are perceptive. The mood of people around them affects them, and those effects can go on to affect the outcomes of their treatment. So, if you take your dog or child to receive acupuncture (which is just a placebo btw), your dog or toddler might not expect it to work, but you do, and, as a result, your mood is likely to improve because you think your pet/child is receiving a valuable treatment. The fact that you seem more at ease and less worried makes your pet/child more at ease, which improves their symptoms. Again, to be clear, the acupuncture (or whatever treatment it was) did nothing. It was entirely your response that caused the apparent improvement.

A related problem arises because improvements in pets and children are often reported by the owners/parents rather than by the patients themselves. So, if you think the treatment works, you’re more likely to see an improvement that isn’t really there. That is human nature. If you think acupuncture works (for example), you are pretty likely to think your dog is limping less after receiving it simply because that is the result you expected to see. Our brains are pattern recognition machines, and while that serves us very well in some cases, it also makes us very prone to biases. This sort of bias in the reporting of outcomes is yet another type of placebo effect, and, again, it has utterly nothing to do with an actual improvement in the patient.

The point is simply that placebo effects absolutely can be at play for children, pets, and skeptics, so the fact that an anecdote relates to them does not make the anecdote reliable evidence of causation, nor does it mean that the observed result wasn’t a placebo effect.

It’s not “mind over matter,” and it’s not effective

As I bring this post to a close, I want to stress that none of these placebo effects are situations of “mind over matter.” They are not situations where people are actually getting better because of the placebo. I’ve been largely focusing on placebo effects that aren’t related to the patient expecting to get better, but I should explicitly state that those effects do exist as well. A patient who thinks they are receiving a useful treatment is more likely to report a reduction in some subjective symptoms, such as pain, but even in that subset of placebo effects, emphasis has to be placed on the word “symptoms.” They are not actually getting better; their brain is simply playing a trick on them to make them feel better. So no underlying condition has actually been treated (which is pretty ironic given how often the same people who tout placebo effects like to erroneously claim that “modern medicine doesn’t treat the underlying causes of illness”).

All of this makes it absurd to argue that a treatment is still beneficial even if it is “just a placebo.” As you can hopefully now see, that is a hollow argument. Placebo effects are not actual improvements. They are the background changes that are not caused by a biologically active treatment. They are, by definition, a lack of effect of the thing being tested. Indeed, for some types of placebo effects (such as regression to the mean) they are literally what would happen if you did nothing! So, passing off quack treatments as actual therapies in order to “elicit” a placebo effect is dangerous and unethical.

Further, even if you want to narrowly focus on the subset of effects that result in patients feeling better because they think they should feel better, it should be noted that real treatments can also generate that transient perception of an improvement. So why on earth should we recommend a quack treatment when we can recommend a real one?

Also, on that note, there are certainly things that can and should be done to increase the efficacy of real medicine. We know that things like good patient-doctor relationships improve how patients feel. So absolutely we should work on things like that in tandem with science-based medicine (indeed that is part of science-based medicine), but it absolutely does not mean that we should use discredited treatments and chase magic and wishful thinking in the vain hopes of evoking a placebo effect.

Note: Please read this post and the systematic reviews and analyses discussed therein before claiming that acupuncture actually works.

Related posts

- 5 reasons why anecdotes are totally worthless

- Acupuncture is just a placebo

- If anecdotes are evidence, why aren’t you drinking paint thinner?

- Naturopaths use deceptive tactics to support pseudoscience

- Is it likely that alternative medicine works? The importance of prior probability

- Most scientific studies are wrong, but that doesn’t mean what you think it means