One of the most common unifying themes of the anti-science movement is the notion that large corporations and governments are concealing the truth for the sake of monetary gain. These conspiracy theories pervade blogs against GMOs, vaccines, modern medicine, global warming, etc., and they have led to the common trope, “follow the money.” This is a challenge that I repeatedly see anti-scientists make, and the idea is that if we simply follow the money trail, we will find that climate scientists are being bought off, the food supply is being controlled by the evil overlords at Monsanto, vaccine researchers are being paid by pharmaceutical companies, etc. This challenge is designed to quickly silence all opposition by establishing that all opposing research is biased and the scientists are only in it for the money.

There are, however, several obvious problems with this challenge. First, it is an ad hominem fallacy. For example, the fact that a vaccine researcher works for a pharmaceutical company does not automatically mean that he or she fudges the results. This leads to the second problem: for this argument to work, you need to show that researchers are actually being paid to be dishonest, not simply that their job is doing research. This places the burden of proof on the person telling you to “follow the money.” In other words, they must provide evidence of widespread corruption and dishonesty to support their claim. Finally, this challenge is applied inconsistently, and the people who issue it completely ignore the fact that many of their “experts” have financial conflicts of interest.

So ultimately, this challenge is a bad one because it has a logical fallacy at its core and places the onus on the person issuing the challenge. We have to make decisions based on facts, not based on the people who produced those facts. Nevertheless, for the sake of argument, I want to accept this challenge. I am going to “follow the money” on global warming, GMOs, and vaccines, and I am going to show that if we accept this illegitimate challenge, it actually ends very badly for the anti-scientists. In other words, I intend to beat them at their own game (note: this post is long, so you can follow the hyperlinks to the different sections).

Global Warming

When following the money, it’s important to look at what each group stands to gain from their position (this is a task that anti-scientists tend to utterly fail at). Let’s start with the scientists who think that we are causing the climate to change. Most of the climate deniers that I have talked to think that these scientists are either directly getting paid off or just going along with it to get grant money. I’ve talked about the problems with the grant argument before, but, since I have agreed to play by the anti-scientists’ rules, I will overlook those problems for the time being and say (for the sake of argument only) that it’s a plausible claim. Nevertheless, there is still a substantial problem with this line of reasoning. Namely, where is the money coming from?

Ultimately, if we trace it back far enough, the money for most major grants originates with the government. Indeed, many of the climate change deniers that I know think that corrupt politicians are behind this supposed hoax, but this raises an important question: “why would politicians fake climate change?” There’s no obvious answer to this quandary. At least in the US, climate change has generally been an unpopular political position. So saying that they are faking it to get votes is just silly. Therefore, most people say that they created this hoax to get money. The problem here should be obvious: most politicians don’t get any money for supporting actions to prevent climate change. Yes, climate change initiatives do often involve taxes, but that tax money doesn’t go straight into the politicians’ pockets. Further, let’s not forget that the government gives numerous tax breaks for installing renewable energy sources. Also, keep in mind that we began the journey down this money trail with the government giving billions of dollars to researchers to study climate change. How exactly are the politicians gaining money from taxes while simultaneously spending billions on climate research? Additionally, one of the most common arguments against trying to prevent climate change is that it will cost the government money and destroy the economy. I’ve talked to people who will in a single breath tell me that taking action against climate change will bankrupt the government and that the government is paying off scientists in order to make money. These two views are clearly incompatible with one another. Finally, we always need to keep in mind that it’s not just the US. Almost every government in the world has acknowledged climate change and is supporting climate change research.

Given the complete lack of motive for politicians, some people instead say that environmental groups are the source of the funding. The most obvious problem with this is that most environmental groups are non-profits, which by definition means that they aren’t in it for the money. Further, environmental groups exist to deal with serious environmental problems, and there are plenty of real problems to take care of without inventing fictional ones. It makes absolutely no sense for these organizations to fake climate change when there are so many other problems to solve. They have nothing to gain from creating a fake crisis.

Now, having established a complete lack of incentive for starting this supposed conspiracy, I want to flip things around and look at the people who would benefit from denying climate change. This time, we have a very clear and obvious group that benefits enormously from denying global warming. I am, of course, referring to fossil fuel companies. Switching to renewable energy sources seriously hurts their bottom line. Further, unlike those who support action to prevent climate change, we have a clear money trail from fossil fuel companies to climate change deniers. It is undeniable that companies like Koch Industries and ExxonMobil have dumped millions of dollars into climate change denial. Further, the flow of money is not limited to think tanks and public groups. Many of the most prominent global warming denying climatologists have financial relationships with oil companies. A recent prominent example is Dr. Soon, who appears not only to have received funding from oil companies, but also to have failed to report a conflict of interest in his publications (that’s a major taboo in science).

I want to be perfectly clear here. I do not personally think that all of these scientists are corrupt (though some of them likely are), nor do I think that we should automatically discredit their papers because they received some funding from oil companies, but I agreed to play by the anti-scientists’ rules, and their rule is “follow the money.” When we do that, we clearly see money going from oil companies to climate change deniers. In contrast, there is no clear financial benefit to creating a climate change hoax. Yes, scientists receive grant funding to study climate change, but there is no reason for governments to give out that money unless climate change is a real thing. Therefore, according to climate change deniers’ own rules, we should reject the “evidence” against climate change because of financial conflicts of interest.

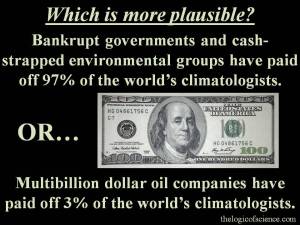

Finally, remember that roughly 97% of climatologists, and over 80% of the general scientific community agree that we are causing climate change. That’s an awful lot of people to pay off. So, the question that you really have to ask yourself is this: which actually seems more plausible, that bankrupt governments and cash-strapped environmental groups have paid off 97% of climatologists without any clear motive for doing so, or enormous and powerful oil companies have paid off 3% of climatologists in order to protect their bottom line?

GMOs

Anytime that the topic of GMOs arises, all blame instantly gets placed on Monsanto. This company has been vilified to an utterly absurd degree, and most anti-GMO activists seem to be under the impression that it has a monopoly on our food supply. The reality, however, is that Monsanto isn’t that large. For example, in 2013 Monsanto’s net sales were worth $14.9 billion. To be clear, that’s a lot of money, but it’s hardly enough to buy a strong scientific consensus or to monopolize the food supply. It’s roughly the same annual net sales as Starbucks.

Perhaps the most telling number is, however, the annual net sales of Whole Foods (one of the largest organic food chains). According to their own reports, Whole Foods had net sales of $12.9 billion in 2013. That’s only two billion less than Monsanto. Are you honestly going to tell me that two billion dollars is enough to pay off thousands of scientists from around the world? How exactly has an extra two billion dollars allowed Monsanto to monopolize the food supply?
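For anyone who wants to check the comparison, here is a minimal sketch of the arithmetic using only the two 2013 figures quoted above. The variable names are my own and purely illustrative.

```python
# 2013 figures as quoted in this post, in billions of US dollars.
# Variable names are illustrative, not taken from any company report.
monsanto_2013 = 14.9
whole_foods_2013 = 12.9

gap = monsanto_2013 - whole_foods_2013      # how much larger Monsanto is
ratio = whole_foods_2013 / monsanto_2013    # Whole Foods relative to Monsanto

print(f"Gap: ${gap:.1f} billion")
print(f"Whole Foods is about {ratio:.0%} of Monsanto's size by this measure")
```

The point of the ratio is simply that the two companies are in the same league, which makes it hard to argue that one of them can buy a consensus while the other cannot.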

Further, many people act as if Monsanto is the only player in the GMO world, but that is far from true. In fact, it’s not even the largest company involved. Cargill is much larger. Now, lest anyone suddenly jump over to saying that Cargill is the one paying off scientists, realize that Monsanto has always been the target of this conspiracy theory, so you can’t just switch over to Cargill in an attempt to save your argument (that would be a logically flawed tactic known as shifting the goalposts). Further, Cargill is only slightly larger than Koch Industries, and remember that oil companies like Koch and ExxonMobil have been totally unable to purchase a scientific consensus, so it would be rather curious if Cargill had succeeded at that endeavor.

In addition to these large companies, there are numerous independent scientists, non-profit organizations, and smaller companies that research and create GMOs. In fact, the Bill and Melinda Gates Foundation has invested millions of dollars in GMOs. One would think that investments by a major humanitarian organization would demonstrate that GMOs aren’t just about evil corporations trying to take over the world, but in the minds of conspiracy theorists, this is nothing more than evidence that Gates is in fact a sinister man trying to depopulate the planet. The problem with that line of reasoning is, of course, that it commits an ad hoc fallacy (or possibly a question begging fallacy depending on how it’s worded). In other words, I would not accept that Gates is trying to commit genocide unless I had already accepted that GMOs are evil.

Finally, I want to look at who benefits from opposing GMOs. I’ve already pointed out that Whole Foods makes almost as much as Monsanto, but Whole Foods is not alone. There are numerous organic companies that make huge profits off of their products, and all of those companies have an enormous financial interest in smearing GMOs. Beyond the actual companies, there are plenty of individuals who make their living off of attacking GMOs and “Big Ag.” Vani Hari (a.k.a. Food Babe) is probably the most prominent of these activists. Her favorite response to critics is simply to call them “shills,” and she argues that we shouldn’t trust scientists because they are paid to do research on GMOs. The problem is that she makes her entire income off of her blog, store, books, talks, etc. So, by her own logic, we shouldn’t trust her since she has a financial conflict of interest.

Again, I do not personally think that we should ignore her because of her finances (we should ignore her because she’s full of crap). I think that she probably does truly believe the nonsense that she promotes, but my point is that if we are going to play this game of following the money, then we must acknowledge that many of the people opposing GMOs have a large financial interest in doing so. Yes, Monsanto makes billions of dollars and is a for-profit company, but Whole Foods makes almost as much and it is also a for-profit company. Yes, there are plenty of GMO supporters who get paid to do research on GMOs, but there are also plenty of GMO opponents who get paid for opposing GMOs. Therefore, if we are going to follow the money, it is, at best, a stalemate.

Vaccines

It is rare to talk to an anti-vaxxer without them calling someone a “shill,” and perhaps their most common argument is that Big Pharma is covering up the truth about vaccines in order to make money. As I will demonstrate, however, this claim is completely erroneous.

The first problem is simply that vaccines aren’t worth that much to pharmaceutical companies. Skeptical Raptor did a fantastic job of explaining this, but to give a quick summary, vaccines are expensive to produce and cheap to purchase. In fact, many governments force pharmaceutical companies to provide them for free to lots of people. So even at a quick pass that doesn’t take into account costs like R&D, vaccines make up less than 2% of pharmaceutical companies’ revenue. Once you actually account for factors like the billions of dollars spent on vaccine research, you end up with around 2.5 billion dollars in annual profit from vaccines. Now, two and a half billion may sound like a lot, but remember that we are dealing with companies that make several hundred billion or even trillion dollars in a single year. With that type of cash flow, 2.5 billion is almost nothing, and it’s certainly not worth creating a massive global conspiracy that involves paying off tens of thousands of scientists and doctors from numerous universities and hospitals from every country in the world. The math just doesn’t add up.

Further, if pharmaceutical companies were really only after money, then they shouldn’t be producing vaccines because it costs far more to treat a disease than to prevent it. For example, one study found that it costs over $10,000 per person to treat measles. Another study estimated that it cost between 2.7 and 5.3 million dollars to treat 107 measles cases. For those playing along at home, that’s roughly 25–50 thousand dollars per case! In contrast, the measles vaccine only costs $19–50. So pharmaceutical companies could make way more money from treating measles than from preventing it (on a side note, most outbreaks are in fact caused by unvaccinated people, and you are far less likely to get a given disease if you have been vaccinated against it).
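If you would rather check the per-case figure than take my word for it, the arithmetic is easy to reproduce. This is a rough sketch using only the numbers quoted above; the variable names are illustrative, and the final comparison deliberately uses the cheapest treatment estimate against the most expensive vaccine price.

```python
# Figures as quoted in this post; variable names are illustrative.
outbreak_cost_low = 2_700_000    # dollars, lower estimate for the outbreak
outbreak_cost_high = 5_300_000   # dollars, upper estimate for the outbreak
cases = 107
vaccine_cost_low, vaccine_cost_high = 19, 50  # dollars per dose

per_case_low = outbreak_cost_low / cases
per_case_high = outbreak_cost_high / cases

# Most conservative comparison: cheapest treatment estimate
# divided by the most expensive vaccine price.
min_ratio = per_case_low / vaccine_cost_high

print(f"Cost per case: ${per_case_low:,.0f} to ${per_case_high:,.0f}")
print(f"Treatment costs at least {min_ratio:,.0f} times the vaccine")
```

Even under the assumptions least favorable to my argument, treating a case costs hundreds of times more than preventing it.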

To further drive this point home, consider the fact that prior to the polio vaccine there were entire hospitals devoted to treating that one disease. Think about that for a minute: the vaccine made entire hospitals (complete with doctors, nurses, administrative staff, etc.) totally obsolete. A study of the costs and benefits of the polio vaccine estimated that by 2015, the polio vaccine will have saved $178 billion in the US alone. Please, tell me again how vaccines are worth billions of dollars to pharmaceutical companies. The numbers don’t lie. It’s cheaper to prevent a disease than it is to treat it.

Despite all of this evidence that vaccines aren’t in pharmaceutical companies’ best interests, many anti-vaxxers continue to insist that it’s all about the money, and a common claim is that all of the studies supporting vaccines were paid for by vaccine companies and conducted by scientists who work for those companies. On numerous occasions, I have provided an anti-vaxxer with a peer-reviewed study only to have them instantly reject it with a comment like, “why should I trust a study that was funded by Big Pharma?” To quote an unfortunately popular article by Natural Health Warriors, “vaccine safety trials are paid for by the very people who make the vaccines, so there is no possibility of the information being unbiased or truthful.” That’s about like saying, “the safety trials of Toyotas were conducted by Toyota, therefore there is no possibility that Toyotas are safe.” Nevertheless, looking beyond the patent absurdity of the “no possibility” clause, this claim simply isn’t true. Sure, vaccine companies have been behind some of the safety trials, but there have been plenty of trials conducted by independent scientists working off of grants that did not originate with pharmaceutical companies. Further, all scientific papers list their funding sources, the author affiliations, and any financial conflicts of interest. Half of the time when I see people blindly writing off a study as “biased,” they have completely failed to look at this information. Therefore, I wanted to examine a small sample of the literature to see what type of pharmaceutical influences I could find.

I decided to take a quick look at the literature on vaccines and autism (since this is generally the number one safety concern I see people bring up). So, I chose 10 scientifically sound papers and looked at their funding and author affiliations (note: I only looked at their scientific content when selecting these 10 papers, and I did not know anything about their funding or authors until after I had selected all 10; the papers are listed at the end of this post). These 10 papers were authored by 57 different researchers. Only seven authors were involved in more than one paper, and they only authored two of these papers each. These 57 authors were affiliated with 22 different organizations (note: I did not split up departments within organizations; there were, for example, several departments of the CDC). Twelve of the organizations were hospitals/universities (some were hospitals attached to a university, thus I lumped those categories), seven were government organizations like the CDC (multiple countries were represented), and only three were companies. Two of the companies were Abt Associates Inc. and Kaiser Permanente Northern California (the third was a health care company), and as far as I can tell, neither of these companies actually manufactures vaccines. They are certainly involved in vaccine research, but they aren’t pharmaceutical companies that are producing vaccines. Authors from those companies were only involved with two of the studies (Verstraeten et al. 2003 and Price et al. 2010). So not one of the 57 authors was actually employed by a pharmaceutical company.
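The breakdown above can be tallied in a few lines. This is only a sketch of the bookkeeping; the category labels and variable names are my own shorthand, and the counts are the ones reported in the paragraph above.

```python
# Counts of author affiliations as reported above; labels are shorthand.
affiliations = {
    "hospitals/universities": 12,
    "government organizations": 7,
    "companies": 3,
}

total = sum(affiliations.values())

for kind, count in affiliations.items():
    print(f"{kind}: {count} of {total} ({count / total:.0%})")
```

Running the same kind of tally on your own sample of papers is a quick way to see how much (or how little) of the author list comes from industry.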

Finally, let’s look at the grant agencies involved. I counted 15 granting agencies, which ranged from organizations that were focused on autism research to massive groups like WHO and CDC. Of those 15 granting agencies, not one was a pharmaceutical company. Two studies (Smeeth et al. 2004 and Price et al. 2010) did, however, acknowledge potential financial conflicts of interest. Several (but not all) of their authors had previously received funding from vaccine companies for other projects. Nevertheless, those funds should not have affected these papers, and even if they did, that still leaves us with eight solid papers with no financial ties to vaccine manufacturers.

Now, inevitably someone is going to accuse me of having cherry picked these studies, but here’s the thing: you can test this yourself. You can get on PubMed or Google Scholar and look at the author affiliations and funding sources. You don’t have to take my word for it. Further, even if I cherry picked these, that still means that we have at least eight good, completely conflict-free papers which found that vaccines were safe.

Additionally, these publications are not what we would expect if pharmaceutical companies were paying off scientists. Remember that there are lots of different companies that compete with each other. It makes absolutely no sense for these companies to pay off scientists to write yet another paper saying that vaccines don’t cause autism. If you haven’t believed the last 100 papers, why should we expect number 101 to make a difference? Rather, if there were a massive conspiracy, it would make sense to target the people who actually care about the scientific literature. In other words, rather than making broad statements about the safety of vaccines, they should claim that the vaccines manufactured by company X are safe, whereas the vaccines by other companies are dangerous. If vaccine companies have scientists in their pockets, then we should see a war between companies about whose vaccines are safe. Think about the logic here for a minute. Anti-vaxxers already think that vaccines are dangerous, so they don’t matter, but those of us who care about the literature are going to be very interested in seeing that some companies are safer than others. If, for example, several studies came out showing that vaccines made by GlaxoSmithKline were dangerous but vaccines made by Merck were safe, I would absolutely demand that my vaccines came from Merck, and so would tons of other scientifically minded people. That is what we would expect a conspiracy to look like. Drug companies should be fighting with each other. Instead, we simply see paper after paper showing over and over again that vaccines are safe, regardless of what company they came from.

Finally, I again want to flip the situation around and look at the finances of the people who oppose vaccines. Unlike many of the scientists doing actual research on vaccines, anti-vaxxers often have clear conflicts of interest. Most famously, Andrew Wakefield (the man who started the myth that vaccines cause autism) has been found guilty of falsifying data and receiving money from lawyers who were intent on suing vaccine companies. Nevertheless, many anti-vaxxers still follow Wakefield and argue that pharmaceutical companies are simply trying to silence him. Consider for a minute how absolutely fantastic this double standard is. Anti-vaxxers consider anyone who opposes them to be a shill, and they repeatedly insist that we have to follow the money, but when we actually follow the money and clearly demonstrate that Wakefield was being paid off, they suddenly ignore their own rules and claim that Wakefield is a hero who is being silenced for telling the truth. It’s as beautiful a case study in ad hoc logic and inconsistent reasoning as I’ve ever seen.

Wakefield is admittedly an extreme example, but many other less extreme cases exist. For example, have you ever stopped to follow the money on the anti-vaccine blogs and web pages that pollute the internet? If you haven’t, you should, because most of the major ones include a store selling the products that you supposedly should use instead of vaccines. GreenMedInfo, Natural News, Mercola, Modern Alternative Mama, etc. all have stores selling their products and books. Similarly, famous anti-vaccine doctors like Sherri Tenpenny make quite a bit of money off of their books, speaking tours, etc. This is true for much more than just vaccines. You find this pattern throughout the amorphous mess that is alternative medicine, and it’s actually a brilliant business strategy when you think about it. First, you scare people about the horrors of vaccines and traditional “western” medicines. Then, you tell them about some amazing “natural” product that Big Pharma doesn’t want you to know about because it can cure everything from measles to infertility. Finally, you direct them to your store which just happens to sell that miracle product. There’s clearly no conflict of interest there (note the immense sarcasm).

My point in all of this is really quite simple: if we accept anti-scientists’ logically invalid challenge to follow the money, things end very badly for the anti-scientists. Decisions need to be made based on facts, not the people who support those facts, but if we agree to simply play by the anti-scientists’ rules, then we find a lack of motive for scientists to falsify data and strong financial motivation for anti-scientists to invent fictional conspiracies and oppose science. If anti-scientists actually followed their own rules, they would avoid most of the pages and blogs that they so dearly love to read and repost.

List of grant agencies

- America’s Health Insurance Plans

- Autism Speaks

- Centers for Disease Control and Prevention

- Danish National Research Foundation

- Harvard Medical School

- Health Resources and Service Administration

- Kaiser Permanente Northern California

- Medicines Control Agency

- National Alliance for Autism Research

- National Institute of Mental Health

- National Vaccine Program Office and National Immunization Program

- Statens Serum Institute, Copenhagen, Denmark

- UK Medical Research Council

- University of California Los Angeles

- World Health Organization

List of author affiliations

- Abt Associates Inc.

- Centers for Disease Control and Prevention

- Danish Epidemiology Science Center

- Department of Statistics, Open University

- Group Health Cooperative of Puget Sound, Seattle, Washington

- Harvard Pilgrim Health Care Institute, Harvard Medical School

- Health Protection Agency, Communicable Disease Surveillance Centre

- Immunization Division, Public Health Laboratory Service Communicable Disease Surveillance Center

- Immunization Safety Office

- Institute of Psychiatry, Kings College, London

- Juntendo University School of Medicine

- Kaiser Permanente Northern California, Oakland, California

- London School of Hygiene and Tropical Medicine, London, UK.

- McGill University, Montreal Children’s Hospital, Canada

- Morbidity and Health Care Team, Office for National Statistics, London, United Kingdom.

- National Center for Chronic Disease Prevention and Health Promotion

- Otsuma Women’s University

- Royal Free Campus, Royal Free and University College Medical School, University College London

- Statens Serum Institute, Copenhagen, Denmark

- University of Washington

- Whiteley-Martin Research Centre, Discipline of Surgery, The University of Sydney, Nepean Hospital

- Yokohama Psycho-Developmental Clinic